Key Takeaways

Most MLR rework is preventable: quality failures almost always originate before content reaches the formal MLR review process.

MLR rework is a workflow design problem. The fix starts before submission.

The MLR review process is where every promotional campaign in pharma earns its approval or goes back for revision. Before an HCP sales aid, patient-facing website, or disease awareness piece can be distributed, it must clear medical, legal, and regulatory scrutiny. In 2025, FDA issued over 200 enforcement letters related to prescription drug advertising and promotion, with a significant surge concentrated in the final quarter of the year, per a year-in-review analysis by King & Spalding. False or misleading risk presentations, inadequate fair balance, and unsubstantiated claims led the list of cited violations.

For most pharma marketing and MLR operations teams, the biggest driver of review delays is content cycling back through revision. Materials go back through review because issues that could have been caught earlier were not: claims without proper substantiation, incomplete fair balance, mismatched references. Each cycle adds days or weeks. The sections below outline where those revision cycles originate and what a well-structured pre-submission process looks like in practice.

Why Does the MLR Review Process Generate So Much Rework?

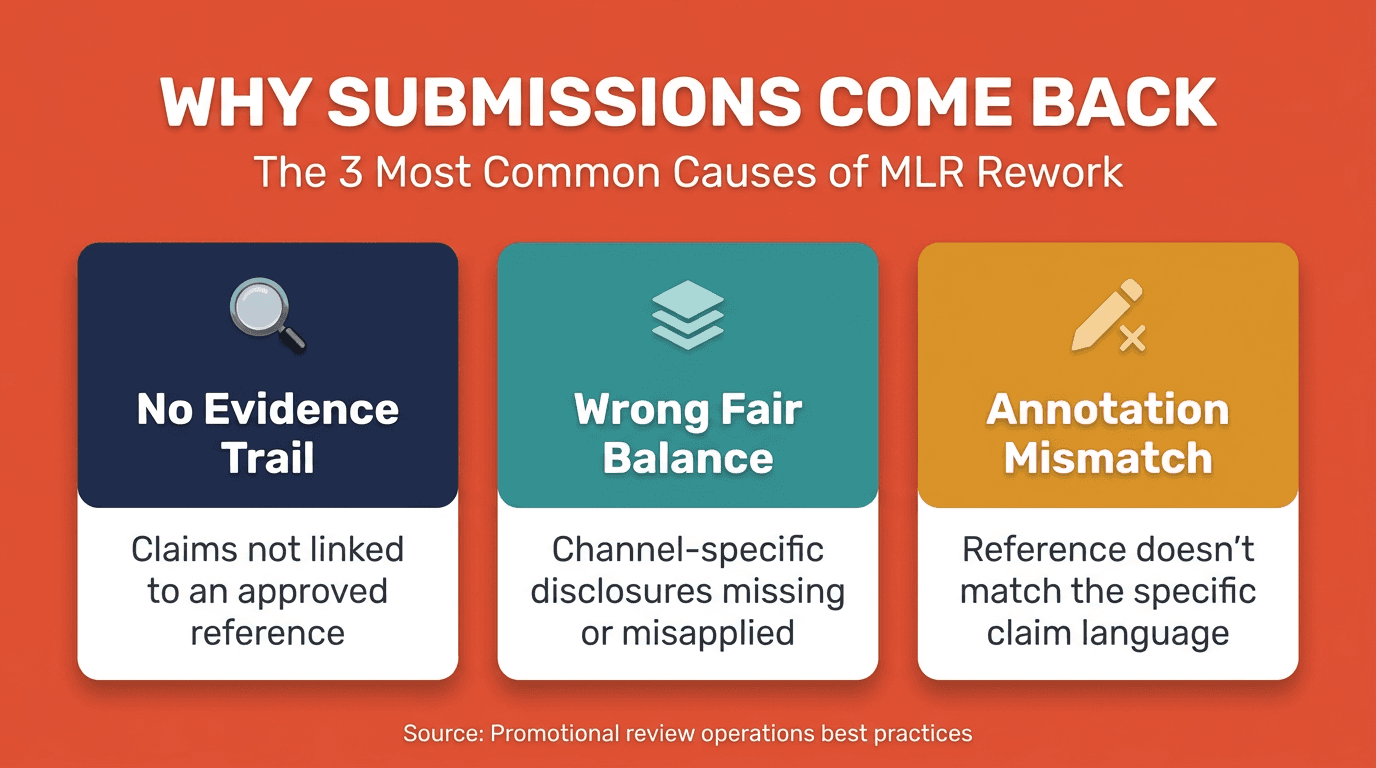

Most revision cycles are not random. They follow predictable patterns tied to specific gaps in how content is prepared before it enters the formal review queue. The root causes cluster consistently: claims that lack traceable substantiation, incomplete fair balance disclosures, reference annotations that do not match the copy they support, and brand or editorial inconsistencies that require legal resolution. Each is a pre-submission quality failure. Each is preventable.

Claims Without a Traceable Evidence Trail

A claim that cannot be traced back to an approved reference or on-label indication will not survive review. Claims are frequently drafted at speed, pulled from prior campaigns, or borrowed from field materials without confirming that the underlying substantiation still holds. When the regulatory reviewer cannot locate the supporting reference, the material goes back. A claims library with verified, current substantiation attached to every entry eliminates this failure mode. When content teams draw from a validated base, the evidence trail comes with the claim.
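One way to picture a claims library is as a lookup table in which every claim carries its references and the date its substantiation was last confirmed. The sketch below is illustrative only: the `ClaimEntry` fields, the `unsubstantiated` helper, and the one-year staleness window are assumptions made for this example, not a description of any particular tool or regulatory requirement.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ClaimEntry:
    """Hypothetical claims library record."""
    claim_id: str
    text: str
    reference_ids: Tuple[str, ...]
    verified_on: Optional[date]  # None means substantiation was never confirmed


def unsubstantiated(
    claim_ids: List[str],
    library: Dict[str, ClaimEntry],
    max_age_days: int = 365,  # assumed staleness window, adjust to team policy
    today: Optional[date] = None,
) -> List[Tuple[str, str]]:
    """Return (claim_id, reason) pairs for claims to resolve before submission."""
    today = today or date.today()
    problems = []
    for cid in claim_ids:
        entry = library.get(cid)
        if entry is None:
            problems.append((cid, "not in claims library"))
        elif not entry.reference_ids:
            problems.append((cid, "no reference attached"))
        elif entry.verified_on is None:
            problems.append((cid, "substantiation never verified"))
        elif (today - entry.verified_on).days > max_age_days:
            problems.append((cid, "verification is stale"))
    return problems
```

Run against the claim IDs used in a draft, a check like this returns only the entries that would leave a reviewer hunting for support. Claims that pass still need human judgment on whether the reference is appropriately scoped.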

Fair Balance Gaps and Channel-Specific Disclosure Errors

Fair balance requirements vary by channel and format. A digital banner operates under different disclosure obligations than a journal ad or HCP sales aid. When teams apply a uniform approach across channels, reviewers flag the inconsistencies and the material goes back. The FDA's Office of Prescription Drug Promotion has consistently centered enforcement on risk presentation accuracy and fair balance completeness. Channel-specific standards need to be applied from the first draft, not corrected after reviewers identify the gap.

What Does Effective Pre-Submission Review Discipline Require?

Pre-submission review is a structured quality gate applied before content enters the formal promotional review committee. It covers the same categories formal review examines: regulatory compliance, claim substantiation, fair balance, editorial and brand standards, and channel-specific requirements. When consistently applied with defined criteria and clear ownership, it materially changes how often submissions clear on the first round. Reviewers encounter materials already screened for common failure modes and can spend their time on the substantive compliance decisions that require their expertise.

Reference Completeness and Annotation Accuracy

Every claim needs a corresponding reference annotated correctly to the specific language in the content. Annotation errors, from a mismatched page number to a reference that supports a narrower claim than what appears in copy, are among the most frequent triggers of revision requests. A pre-MLR review that walks the reference package systematically catches these before the formal queue.
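The mechanical part of that walk-through can be sketched in a few lines. The example below assumes each annotation is a dictionary with `claim`, `ref_id`, and `page` keys, and that the reference package records a page range per reference; these structures and the `check_annotations` name are hypothetical. It catches missing references and out-of-range page numbers only. Whether a reference supports a narrower claim than the copy makes still needs a human read.

```python
def check_annotations(annotations, reference_package):
    """Flag annotations that point at a missing reference or an impossible page.

    annotations: list of dicts with 'claim', 'ref_id', 'page' (assumed shape)
    reference_package: dict mapping ref_id -> {'first_page': int, 'last_page': int}
    """
    issues = []
    for ann in annotations:
        ref = reference_package.get(ann["ref_id"])
        if ref is None:
            issues.append((ann["claim"], "reference missing from package"))
        elif not (ref["first_page"] <= ann["page"] <= ref["last_page"]):
            issues.append((ann["claim"], "page outside reference page range"))
    return issues
```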

Teams that track their own revision data by failure category can build pre-submission checklists around recurring failure modes rather than applying a generic standard. If a specific claim type has generated annotation disputes in prior cycles, that pattern belongs in the checklist.
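As a rough illustration of tracking revision data by failure category, the sketch below tallies a revision log and returns the categories that recur often enough to earn a checklist item. The log format, the category labels, and the `min_count` threshold are assumptions chosen for the example.

```python
from collections import Counter


def recurring_failure_modes(revision_log, min_count=2):
    """Count revision requests per category and keep the recurring ones.

    revision_log: list of dicts, each with a 'category' key (assumed shape)
    Returns (category, count) pairs, most frequent first.
    """
    counts = Counter(entry["category"] for entry in revision_log)
    return [(cat, n) for cat, n in counts.most_common() if n >= min_count]


# Example log using the failure categories discussed in this article
log = [
    {"category": "annotation error"},
    {"category": "fair balance gap"},
    {"category": "annotation error"},
    {"category": "brand inconsistency"},
    {"category": "annotation error"},
    {"category": "fair balance gap"},
]
checklist_candidates = recurring_failure_modes(log)
```

In this example, annotation errors and fair balance gaps clear the threshold, while the single brand inconsistency does not.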

Brand, Editorial, and Regulatory Consistency

Trademark inconsistencies, indication language that deviates from the approved label, and brand guideline violations all generate legal reviewer revision requests. None of these require regulatory expertise to catch. They require a documented standard and a consistent check. For pharma-focused agencies managing content across multiple brands, building this step into the promotional review workflow before submission prevents these issues from entering the MLR queue at all.

Common Rework Causes and Pre-Submission Mitigations

| Rework Cause | Where It Originates | Pre-MLR Mitigation |
|---|---|---|
| Missing or mismatched claim substantiation | Content authoring | Claims library with verified references; annotation review |
| Incomplete fair balance for channel | Creative production | Channel-specific disclosure checklist before submission |
| Trademark or brand guideline errors | Copywriting and layout | Editorial pre-check against brand and legal standards |
| Indication language inconsistent with label | Claim drafting | Label comparison review prior to submission |
| Reference annotations not matching copy | Annotation stage | Systematic reference-to-claim mapping review |

5 Disciplines That Reduce Revision Cycles and Drive Clean Approvals

Teams that consistently earn approval on first submission share a set of operational practices applied earlier in the content lifecycle. These five disciplines form the foundation.

Maintain a living claims library. Store approved claims with verified substantiation, indexed and accessible to content teams. When every piece draws from a validated base, the annotation burden at submission drops and the evidentiary trail is already built.

Define channel-specific fair balance standards. Document disclosure requirements for each format and build them into content briefs from the start. Applying fair balance after creative production, rather than before, is one of the most avoidable sources of revision cycles.

Formalize pre-submission review as a quality gate. Designate this step with defined ownership and a consistent checklist within your promotional review workflow. Track the issues it surfaces by category to identify recurring failure modes over time.

Measure MLR rework by failure type. Revision requests from the review process are data. Teams that aggregate reviewer comments by type (claim issue, fair balance gap, annotation error, brand inconsistency) can identify systemic gaps and measure whether workflow changes are reducing them.

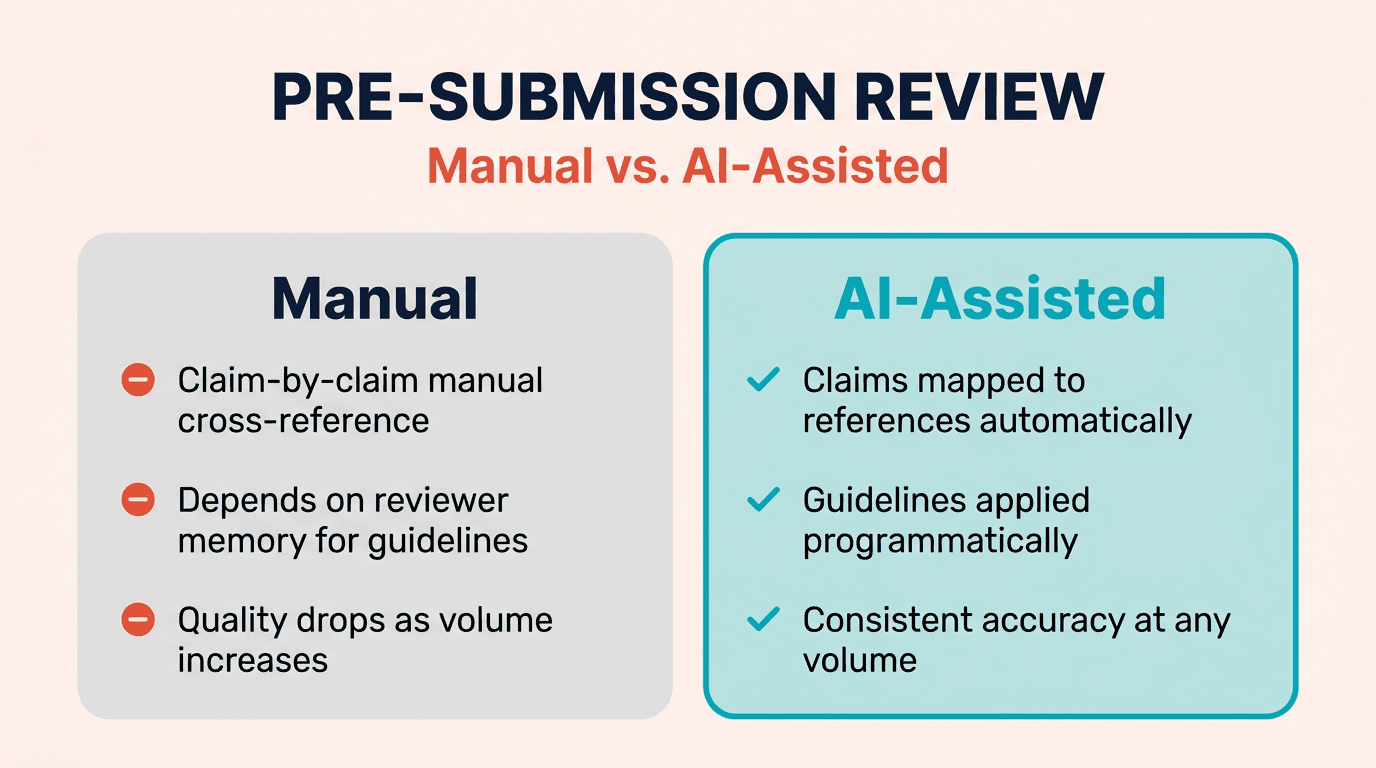

Use AI to surface issues before submission. AI-assisted tools can check regulatory compliance, claim substantiation, fair balance, editorial standards, and channel requirements at the front end of the review cycle. Human reviewers receive pre-triaged materials and focus on the substantive compliance decisions that require professional judgment.

Where Does Human Expertise Remain Essential in the MLR Review Process?

AI-assisted review changes what reviewers spend their time on. It does not change who holds responsibility for the compliance determination. Human judgment is required because compliance decisions involve interpretation, regulatory context, and risk assessment that cannot be fully automated. A regulatory reviewer must assess whether a supporting reference is appropriately scoped for the specific indication. A legal reviewer weighing off-label risk draws on institutional knowledge. A medical reviewer assessing risk presentation applies clinical understanding to the totality of the piece, not just whether individual elements are technically present.

The productive model is AI as a pre-triage layer: surface-level issues caught at scale before reviewers encounter the material. That shift, from detecting preventable errors to evaluating substantive compliance questions, is where efficiency gains occur without compromising review quality. As pharma teams produce more content across more channels, AI-assisted claims verification and fair balance checks allow reviewer capacity to sustain consistent quality across a higher submission volume.

Pre-Submission Quality Check: Manual vs. AI-Assisted

| Review Dimension | Manual Quality Check | AI-Assisted Quality Check |
|---|---|---|
| Claim substantiation check | Reviewer cross-references each claim manually | AI maps claims to references; flags gaps automatically |
| Fair balance completeness | Checked against documented or remembered standard | Checked systematically across all five MLR categories |
| Brand and editorial consistency | Depends on reviewer familiarity with guidelines | Applied programmatically against brand standards |
| Performance under high volume | Quality and speed degrade as submissions increase | Consistent accuracy regardless of volume |
| Rework pattern visibility | Tracked manually, if at all | Categorized and aggregated by issue type |

FAQ

What is the difference between a pre-submission quality check and the formal MLR review process?

A pre-submission quality check is an internal review conducted by the content or agency team before a piece enters the formal promotional review committee, designed to catch preventable errors such as missing references, incomplete fair balance, and brand inconsistencies. The formal MLR review process is the official cross-functional evaluation by medical, legal, and regulatory stakeholders that determines whether content can be approved for distribution.

How are first-time-right approvals typically measured?

Clean first-submission approvals are typically tracked as the percentage of submissions that receive full approval without a revision round, often segmented by reviewer function or content type. Establishing a baseline and tracking it over time shows whether upstream workflow changes are actually reducing revision cycles.
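The arithmetic is simple: clean approvals divided by total submissions, computed per segment. The sketch below assumes each submission record carries a `content_type` and a `revision_rounds` count; both field names and the `first_pass_rate` helper are hypothetical.

```python
from collections import defaultdict


def first_pass_rate(submissions):
    """Share of submissions approved with zero revision rounds, per content type."""
    totals = defaultdict(int)
    clean = defaultdict(int)
    for sub in submissions:
        totals[sub["content_type"]] += 1
        if sub["revision_rounds"] == 0:
            clean[sub["content_type"]] += 1
    return {ctype: clean[ctype] / totals[ctype] for ctype in totals}


submissions = [
    {"content_type": "HCP sales aid", "revision_rounds": 0},
    {"content_type": "HCP sales aid", "revision_rounds": 2},
    {"content_type": "digital banner", "revision_rounds": 0},
    {"content_type": "digital banner", "revision_rounds": 0},
]
rates = first_pass_rate(submissions)
```

Here the sales aids clear on the first round half the time and the banners every time. Recomputing the same figures each quarter gives the baseline comparison described above.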

Does AI reduce the role of human reviewers in pharma promotional review?

No. AI-assisted tools function as a pre-triage layer, flagging surface-level compliance issues before materials reach human reviewers. The compliance determination remains the responsibility of medical, legal, and regulatory professionals. What changes is how reviewers spend their time: less on detecting preventable errors, more on the substantive compliance analysis that requires professional judgment.

How does a structured promotional review workflow reduce risk under increased FDA scrutiny?

A 2025 enforcement review by Covington & Burling documents that FDA's enforcement letters concentrated heavily on misleading risk presentations and insufficient fair balance, with the largest surge issuing in fall 2025. A structured promotional review workflow that includes channel-specific fair balance standards and pre-submission reference verification addresses exactly those failure modes.

Building a Process That Earns Approval the First Time

The MLR review process is rigorous by design. For most operations leaders, the question is not whether that rigor is appropriate but whether the internal processes feeding into it are functioning at a comparable level. Most revision cycles trace back to the same upstream gaps: claims without traceable substantiation, fair balance that does not account for channel, and references that do not match the copy. These gaps are not inevitable. They respond to structural fixes.

A genuine pre-submission quality gate with defined criteria and real accountability is what separates teams that reliably achieve first-time-right approvals from those absorbing the cost of avoidable submission errors. As FDA enforcement intensity continues to rise, the operational stakes attached to that discipline are only increasing.

Revisto was built to support this kind of upstream quality discipline across the full MLR review process, covering all five review categories: regulatory compliance, claim substantiation, fair balance, editorial and brand standards, and market compliance. Learn more about how Revisto works, or connect with the team to see AI-assisted pre-submission review in practice.